Storing Film Data During a Pandemic (and After)

The Film Locker team look at the best ways of keeping your data safe during and after a global pandemic.

With many people in the film production world working from home these days, we’d like to cover the topic of how to store rushes effectively, safely and in a low carbon way, when your office resources are out of reach.

Here at Film Locker we’re all about best practice as well as low carbon, so when it comes to storing data on hard drives we’ve got strong opinions on what’s safe and what’s going to keep you awake at night!

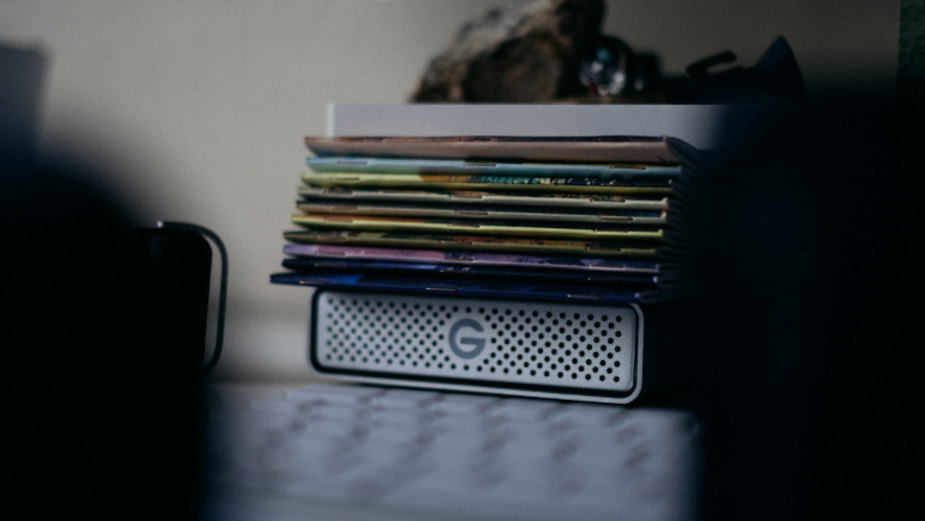

As we all know, using drives is an effective way to move data around in the post process. That’s totally fine, as long as you store those rushes and masters on another medium when the project is finished, and don’t leave them on drives permanently. It’s common to hear that the rushes are on one lonely drive, stashed under someone’s desk, often uncatalogued or not even labelled correctly.

Most people intuitively know that one copy on a drive is not OK – but do we really know why? Overall there is a 12% fail rate on drives if they’re not spun regularly. Also, ‘safe under my desk’ might not be that safe. The environment in which drives need to be kept is very specific, and hard drives fail for the following reasons, in order from most to least common:

1. Being bashed or dropped, or even tipped over on its side – one slip and the ‘platter is shattered’ (as they say), meaning the 0s and 1s can never be read again

2. Circuit board failure – can happen very easily in an environment that isn’t static-controlled, or one with even a bit more moisture. Most offices and homes are not static- or humidity-controlled environments

3. Sticky armatures – very common if drives are not spun for a period of time

4. Actual motor failure – the least common cause, but does occur

So, bu-bye data, and hasta la vista all those rushes you might need for re-cuts or versions, not to mention having to explain this to your client.
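To put that 12% figure in perspective, here is a rough back-of-the-envelope sketch in Python. It rests on two assumptions that are ours, not Backblaze's: that 12% can be read as the chance any single unspun drive fails, and that drives fail independently of one another. The function name `chance_all_copies_fail` is purely illustrative.

```python
# Assumption: 12% is treated as the chance that any one unspun drive fails.
DRIVE_FAIL_RATE = 0.12


def chance_all_copies_fail(copies: int, fail_rate: float = DRIVE_FAIL_RATE) -> float:
    """Probability that every one of `copies` drives fails.

    Assumes the drives fail independently of each other, which is a
    simplification: two drives under the same desk share the same risks.
    """
    if copies < 1:
        raise ValueError("need at least one copy")
    return fail_rate ** copies


if __name__ == "__main__":
    for copies in (1, 2, 3):
        risk = chance_all_copies_fail(copies)
        print(f"{copies} drive copy/copies: {risk:.2%} chance of losing everything")
```

Under those assumptions a second copy cuts the risk a lot, but only if the copies really are independent. Two drives stashed under the same desk can be knocked over, soaked or zapped together, which is one more reason to keep the second copy on a different medium somewhere else.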

So apart from safety, what else is an issue? The actual ecological cost of using a drive once or twice when it’s meant to be used for up to five years is awful. It’s like buying a stainless-steel water bottle, using it once and then throwing it away. No one would do that! So why do we do this with hard drives? The actual carbon impact of that is explained in another post as it’s too much to cover here.

So, now we know the perils of single-copy data on drives, what’s the solution?

For big data sets you want to keep for a long period of time, but don’t necessarily need to access every week or month, the best practice and lowest carbon option is Linear Tape-Open (LTO). LTO is used worldwide: you can buy the kit and do this in house (not impossible, but slightly complex) or use an industry-approved supplier (hint – such as us). LTO is extremely low carbon, super safe and stable (30-year guaranteed retrieval), and much cheaper than using hard drives for storage.

So, save yourself money and save the planet that carbon: use a more stable, sustainable approach to long-term digital film data storage. Please contact us if you need help or advice on this topic.

References

Backblaze - The Shocking Truth — Managing for Hard Drive Failure and Data Corruption, July 11, 2019 https://bit.ly/3ixbeIF

Photo by PJ Gal-Szabo