Digitally Resurrecting Kiyan Prince to Prevent Knife Crime

In May 2006, football prodigy Kiyan Prince tragically died after being stabbed while trying to prevent another child from being bullied. He was 15 years old. Now, drawing on cutting-edge creative technology and AI, Framestore has worked with the agency ENGINE Creative to bring Kiyan back to life virtually and raise awareness of knife crime.

‘Long Live the Prince’ sees Kiyan sign for his former football club, Queens Park Rangers, wearing the squad number ‘30’ to reflect the age he would have been today. In a world first, Kiyan will also be introduced as a non-playable character in EA Sports’ FIFA21, one of the world’s biggest video game franchises. In addition, he will be a collectable footballer in Topps’ Match Attax trading card game, which is being released on May 18th. Brands including adidas and JD Sports will sponsor Kiyan as a professional footballer, with the proceeds going to the Kiyan Prince Foundation.

To find out how this emotional project pushes the boundaries of creative technology, LBB’s Alex Reeves spoke to Framestore’s Karl Woolley, global real-time director, and Johannes Saam, creative technologist.

LBB> What was the brief for Framestore here?

Karl & Johannes> For almost two years we had been talking to ENGINE about creating an adult Kiyan to put into EA’s FIFA. In 2021 EA said yes, with a very strict deadline. Because getting Kiyan into FIFA was at the core of the campaign, everything kicked off at that point. Just like a pro footballer, we wanted Kiyan to be able to secure sponsorship deals, which would generate revenue for the foundation.

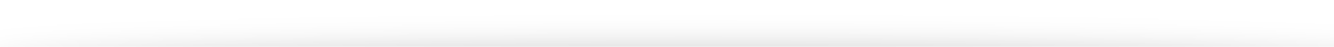

The initial brief was to create Kiyan’s photoreal likeness as a set of stills for ad campaigns. EA would also use those images to build Kiyan’s likeness within its bespoke game engine for FIFA21. The deepfake component required for the film came much later in the project.

LBB> It's obviously a heavy subject. Emotionally, what were the key considerations?

Karl & Johannes> For any parent, what Kiyan’s father, Mark, and his family went through when they lost Kiyan is impossible to comprehend. We had the responsibility of giving the family a chance to see Kiyan again. Realising his likeness for a campaign of this scale was challenging. We are used to working under pressure, but this project was unparalleled.

We focussed on the science first, and then on the “feeling”. There was a moment in the initial development of Kiyan’s likeness when everything clicked and we could feel Kiyan in the image. It was still a sketch at that stage, but it was the first and only time we showed Mark anything before the launch on May 18th. Mark’s visceral reaction to that image was the litmus test for the project.

LBB> Why did you settle on the technological solution you went with?

Karl & Johannes> The solution was determined by time and budget. Chris Scalf, a well-known likeness artist, created the still images of what Kiyan would have looked like at 30. Partway through the project, an additional requirement emerged: a version of Kiyan for video. That is when we started work on a machine-learning likeness. Traditional VFX techniques for creating a digi-double would have taken too long and been too costly. Creating the asset the way we did also means it can be reused to create content for the foundation.

Initial likeness: Photoshop, ZBrush, Maya (incl. XGen). Credit: Chris Scalf

LBB> And what was the specific combination of techniques that were required to put Kiyan in game?

Karl & Johannes> EA has a proprietary system for creating footballers’ likenesses within the FIFA franchise. We worked with the EA team and their artists, using the 2D likeness that Chris Scalf created as the basis.

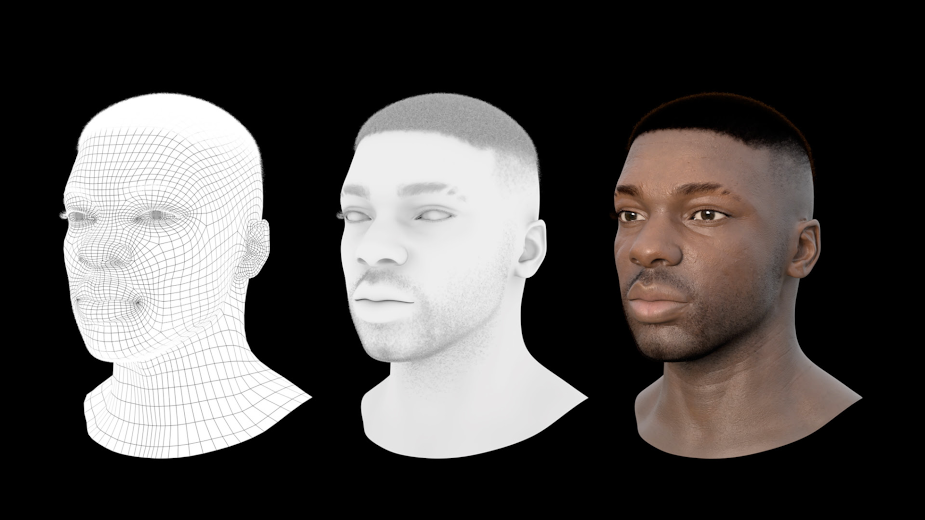

To bring the static 2D images to life for the video, we used a data-driven neural rendering pipeline. This technology is new territory for the VFX world, and we are very proud of the performance and the potential this approach adds to our arsenal. We took the base sculpt and reference images and generated a highly detailed 3D CG model. We used Blender and a combination of neural rigging tools to prepare this geometry for animation, and a rig based on Apple’s ARKit allowed us to motion-capture a reference performance. We then gave virtual Kiyan the right amount of facial hair in Houdini.

Next, we put virtual Kiyan through a ‘Face Gym’ of different expressions and generated more than 6,000 reference images from all angles, covering a variety of facial shapes. We used this dataset to enhance our pre-existing generic deepfake model. We also used the photography and a real Face Gym of a double, which we shot on set. The double’s performance was then trained against virtual Kiyan and refined over a week. This iterative process allowed us to ensure that subtle emotional details were preserved or even enhanced.
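Framestore has not published its pipeline, but the Face Gym idea (rendering one digital head across many head poses and expressions to build a training set) can be sketched as a sampling grid. The sketch below assumes an ARKit-style rig driven by blendshape weights in [0, 1]. The blendshape names are real ARKit coefficient names (ARKit exposes 52; only six are used here), but the pose ranges, counts and the `face_gym_configs` helper are hypothetical, and the actual rendering step is omitted.

```python
import itertools
import random
from dataclasses import dataclass

# Hypothetical subset of ARKit blendshape names; a full ARKit rig exposes 52.
BLENDSHAPES = ("jawOpen", "mouthSmileLeft", "mouthSmileRight",
               "browInnerUp", "eyeBlinkLeft", "eyeBlinkRight")


@dataclass
class RenderConfig:
    yaw_deg: float              # head turn, left/right
    pitch_deg: float            # head tilt, up/down
    weights: dict[str, float]   # blendshape name -> activation in [0, 1]


def face_gym_configs(n_expressions: int, yaws: list[float],
                     pitches: list[float], seed: int = 0) -> list[RenderConfig]:
    """Cross a set of random expressions with a grid of camera angles (hypothetical helper)."""
    rng = random.Random(seed)  # seeded so the same dataset can be regenerated exactly
    # Always include the neutral face so the model sees the resting likeness.
    expressions = [{name: 0.0 for name in BLENDSHAPES}]
    for _ in range(n_expressions - 1):
        # Activate a random subset of one to three shapes at random strengths.
        active = rng.sample(BLENDSHAPES, k=rng.randint(1, 3))
        expressions.append({
            name: (round(rng.uniform(0.2, 1.0), 2) if name in active else 0.0)
            for name in BLENDSHAPES
        })
    # Every expression is rendered from every (yaw, pitch) combination.
    return [RenderConfig(y, p, expr)
            for expr, y, p in itertools.product(expressions, yaws, pitches)]


if __name__ == "__main__":
    yaws = [-60, -45, -30, -15, 0, 15, 30, 45, 60]   # 9 horizontal angles
    pitches = [-20, -10, 0, 10, 20]                  # 5 vertical angles
    configs = face_gym_configs(n_expressions=140, yaws=yaws, pitches=pitches)
    print(len(configs))  # 140 * 9 * 5 = 6300
```

Crossing expressions with a fixed angle grid is one simple way to guarantee every expression is seen from every side; a production setup might instead sample poses randomly per expression.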

The Kiyan project is a perfect example of a new wave of computer graphics: small, decentralised teams drawing on their vast experience, assisted by powerful GPUs and neural networks. The approach is agile and project-focused, minimising human time to deliver outstanding quality on a small budget.

Deepfake: Blender, Houdini, ZBrush, Nuke (incl. the new CopyCat node), plus some neural rendering "secret sauce"

LBB> Let's talk about the history of deepfake technology up to this point. What have been the major applications for it over recent years?

Karl & Johannes> Deepfakes started as a subreddit that exploited a body of interesting academic research. The group used advances in autoencoders and, later, generative adversarial networks (GANs) to generate memes and celebrity deepfakes, basing their posts on the Face2Face papers from 2016-2017. Since then, thanks to the technique’s ease of use and an ever-growing online community, deepfakes have become relatively commonplace. One of the best known is the 2018 deepfake of Barack Obama delivering a fake speech, created with the help of Jordan Peele.

The technique is usually used to transfer the likeness of a source ‘donor’ onto a target ‘actor’. While it started in a relatively niche corner of the internet, the technology is now mainstream and has shown both its potential and its dangers.
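The autoencoder-based face swap popularised by the original deepfakes community is commonly described as one shared encoder plus a separate decoder per identity. Each decoder learns to reconstruct its own person’s faces from a common latent code, so encoding a frame of the target actor and decoding it with the donor’s decoder yields the donor’s face in the actor’s pose and expression. The sketch below shows only that routing, using tiny untrained linear layers in plain Python as stand-ins for convolutional networks. The class name, layer sizes and random weights are hypothetical, and the optimiser that would minimise the reconstruction loss is omitted.

```python
import random

Vector = list[float]
Matrix = list[list[float]]


def random_matrix(rows: int, cols: int, rng: random.Random) -> Matrix:
    # Small random weights standing in for learned parameters.
    return [[rng.gauss(0.0, 0.1) for _ in range(cols)] for _ in range(rows)]


def matvec(m: Matrix, v: Vector) -> Vector:
    # A linear layer: multiply a weight matrix by an input vector.
    return [sum(w * x for w, x in zip(row, v)) for row in m]


class FaceSwapAutoencoder:
    """Shared encoder + one decoder per identity (untrained, illustrative only)."""

    def __init__(self, image_dim: int, latent_dim: int,
                 identities: list[str], seed: int = 0):
        rng = random.Random(seed)
        # One encoder shared by all identities, so the latent code is pushed
        # towards pose and expression rather than who the person is.
        self.encoder = random_matrix(latent_dim, image_dim, rng)
        # A separate decoder per identity, each learning to draw one face.
        self.decoders = {name: random_matrix(image_dim, latent_dim, rng)
                         for name in identities}

    def encode(self, face: Vector) -> Vector:
        return matvec(self.encoder, face)

    def decode(self, latent: Vector, identity: str) -> Vector:
        return matvec(self.decoders[identity], latent)

    def reconstruction_loss(self, face: Vector, identity: str) -> float:
        # Training objective (optimiser omitted): mean squared error between a
        # face and its reconstruction through that identity's own decoder.
        recon = self.decode(self.encode(face), identity)
        return sum((a - b) ** 2 for a, b in zip(face, recon)) / len(face)

    def swap(self, actor_frame: Vector, donor: str) -> Vector:
        # The swap: encode the actor's frame, decode with the donor's decoder.
        return self.decode(self.encode(actor_frame), donor)


if __name__ == "__main__":
    model = FaceSwapAutoencoder(image_dim=16, latent_dim=4,
                                identities=["actor", "donor"])
    frame = [random.random() for _ in range(16)]  # stand-in for a flattened face crop
    swapped = model.swap(frame, donor="donor")
    print(len(swapped))  # 16
```

The shared encoder is the key design choice: because both identities pass through the same bottleneck during training, the latent code carries the performance, and the choice of decoder supplies the likeness.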

Open-source movements and neural, data-driven projects will continue to challenge our definition of media. Neural architectures allow an individual or a small team to achieve results similar to those usually produced by a large team of experts.

LBB> What makes this project such a departure from that use of deepfakes?

Karl & Johannes> This project is particularly unusual because we generated a reference dataset using traditional techniques and then let the AI take over. This symbiosis of art, machine learning, and a meaningful and worthy cause is unique. Replacing a stunt performer’s face with an actor’s is one thing, but recreating an emotional performance to raise awareness of knife crime is a meaningful application. We hope that using deepfakes this way will inspire more creative ideas.

LBB> What's the biggest implication of this in terms of the potential it promises for other projects?

Karl & Johannes> Approaching every challenge as a potential opportunity for machine learning and other data-driven methods will determine the future of content creation. This is true for many industries. We can’t avoid the artificial intelligence revolution. We hope that others will join us in the conversation and form a new wave of creators and creatives collaborating with machines to satisfy the ever-growing hunger for content.